AI Transparency & Explainability: An Essential Guide for 2026

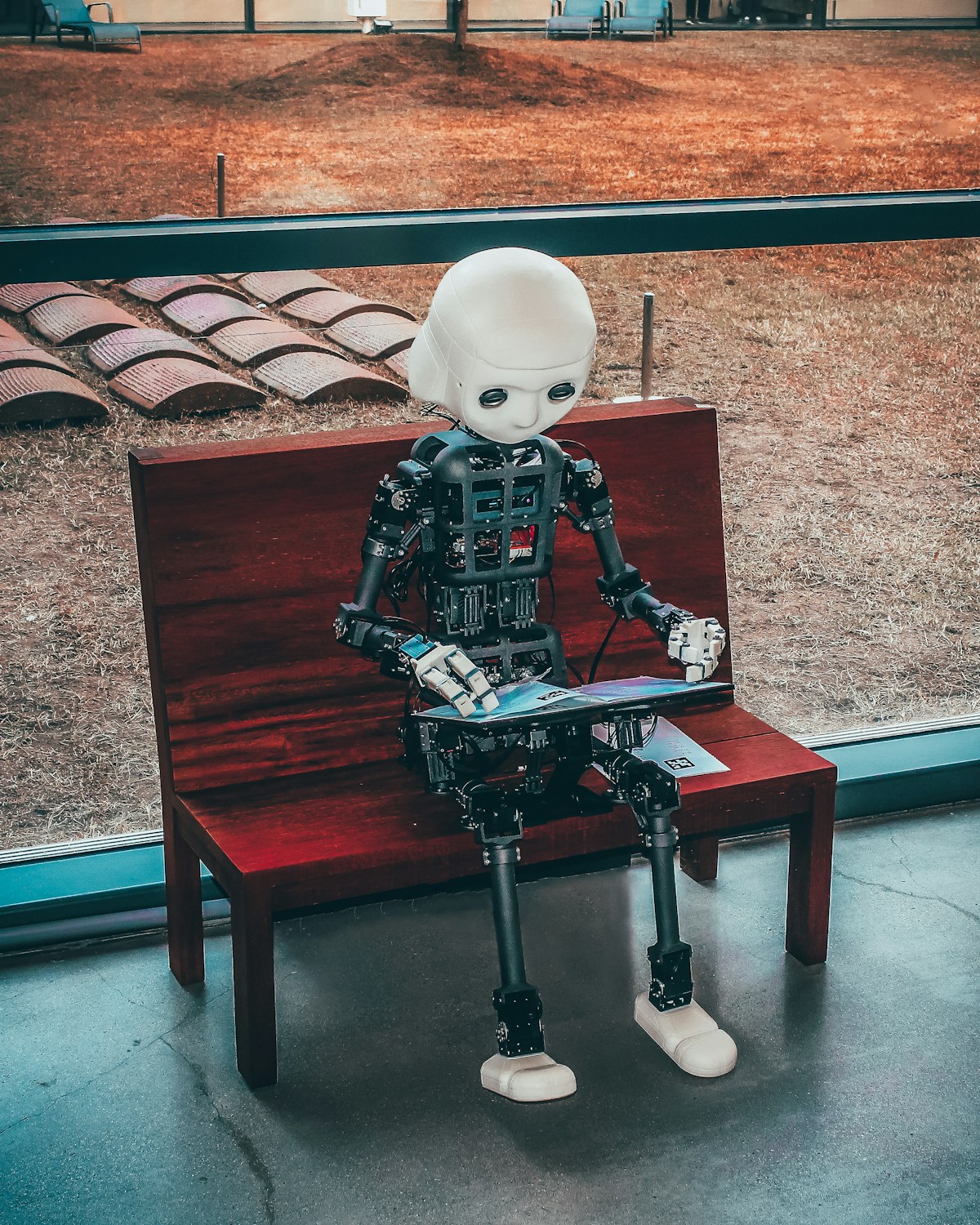

Image credit: Unsplash

As artificial intelligence becomes more deeply embedded in critical sectors such as healthcare, finance, and justice, understanding how and why AI systems reach their decisions has become imperative. In 2026, transparency and explainability (the latter often grouped under the label explainable AI, or XAI) are no longer just best practices; they are increasingly regulatory and ethical requirements that shape the future of AI governance.

What Are AI Transparency and Explainability?

Transparency refers to the ability to understand the internal workings of an AI system, including its training data, algorithms, and decision-making processes; it concerns the openness of the system as a whole. Explainability, by contrast, is the ability to communicate the reasons behind a specific decision or prediction in terms a human can understand. Knowing how a system works in general is not enough; stakeholders also need to understand why it produced a particular output.

Pillars of XAI Requirements

XAI requirements can be categorized into three main pillars:

- Post-Hoc Explainability: Techniques applied after a model is trained to interpret its decisions. Model-agnostic tools such as LIME (Local Interpretable Model-agnostic Explanations) and SHAP (SHapley Additive exPlanations) are widely used to explain individual predictions from complex models such as neural networks, and the explanations they produce can serve as evidence in audits and compliance reviews.

- Inherently Explainable Models: Where appropriate, prioritizing models that are transparent by design, such as decision trees or linear regression models, whose decision logic can be read directly. This is not always feasible for complex tasks, but where it is, it reduces the need for post-hoc techniques.

- Documentation and Governance: Maintaining detailed records of the model's lifecycle, including data sources, preprocessing, algorithmic choices, performance metrics, and bias testing results. Regulation such as the EU AI Act and guidance such as the NIST AI Risk Management Framework in the US emphasize robust documentation as a basis for accountability.
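To make the post-hoc pillar concrete, here is a minimal sketch that computes exact Shapley values, the game-theoretic quantity underlying SHAP, for a single prediction of a toy model. It is an illustration under stated assumptions, not the `shap` library's API: `shapley_values` and `toy_model` are hypothetical names, and features left out of a coalition are replaced with a fixed baseline value, which is only one of several ways to define a coalition's value. Exact enumeration grows exponentially with the number of features, which is why practical tools rely on approximations such as KernelSHAP or model-specific algorithms such as TreeSHAP.

```python
from __future__ import annotations

from collections.abc import Callable, Sequence
from itertools import combinations
from math import factorial


def shapley_values(
    predict: Callable[[Sequence[float]], float],
    x: Sequence[float],
    baseline: Sequence[float],
) -> list[float]:
    """Exact Shapley values for one prediction (hypothetical helper, not the shap API).

    Assumption: features outside a coalition take their baseline value.
    """
    n = len(x)

    def value(coalition: frozenset[int]) -> float:
        # Keep coalition features from x; fill every other feature from the baseline.
        z = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return predict(z)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):  # coalition sizes 0 .. n-1 that exclude feature i
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = frozenset(subset)
                # Weighted marginal contribution of feature i to coalition s.
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


def toy_model(z: Sequence[float]) -> float:
    # Hypothetical model with an interaction between features 0 and 2.
    return 2 * z[0] + 3 * z[1] + z[0] * z[2]


phi = shapley_values(toy_model, [1.0, 2.0, 3.0], [0.0, 0.0, 0.0])
print([round(v, 6) for v in phi])  # [3.5, 6.0, 1.5]
```

The attributions add up to the difference between the prediction and the baseline prediction (11 here), a property known as efficiency, and the interaction term `z[0] * z[2]` is split evenly between features 0 and 2 (1.5 each).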
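For the inherently explainable pillar, a linear model shows why post-hoc tooling is often unnecessary: each feature's contribution to a prediction is simply its weight times its value, so the explanation is exact rather than approximated. The `LinearScorer` class, the feature names, and the weights below are hypothetical and chosen only for illustration; in practice the weights would come from fitting the model to data.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class LinearScorer:
    """Hypothetical linear model: score = intercept + sum(weight * feature value)."""

    intercept: float
    weights: dict[str, float]

    def explain(self, features: dict[str, float]) -> dict[str, float]:
        # Each feature's contribution is exactly weight * value; nothing is approximated.
        return {name: w * features[name] for name, w in self.weights.items()}

    def predict(self, features: dict[str, float]) -> float:
        # The prediction is the intercept plus the per-feature contributions.
        return self.intercept + sum(self.explain(features).values())


# Illustrative weights only; a real model would learn these from data.
scorer = LinearScorer(intercept=0.5, weights={"income": 0.3, "debt_ratio": -1.2})
applicant = {"income": 2.0, "debt_ratio": 0.5}
print(scorer.explain(applicant))
print(scorer.predict(applicant))
```

Because `predict` is built from the same contributions that `explain` returns, the explanation always reconciles with the model's output, which is the property post-hoc methods can only approximate for opaque models.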
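For the documentation pillar, a structured record can capture the lifecycle details listed above and flag gaps before an audit. The `ModelRecord` class and its field names are a hypothetical sketch loosely inspired by the idea of model cards; they are not a schema mandated by the EU AI Act or NIST, and the values in the usage lines are placeholders.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass, field


@dataclass
class ModelRecord:
    """Hypothetical lifecycle record covering data, preprocessing, algorithm, metrics, bias tests."""

    model_name: str
    version: str
    data_sources: list[str]
    preprocessing: list[str]
    algorithm: str
    performance_metrics: dict[str, float]
    bias_tests: dict[str, float] = field(default_factory=dict)

    def missing_sections(self) -> list[str]:
        # Report fields left empty so reviewers can spot documentation gaps.
        return [name for name, value in asdict(self).items() if value in ("", [], {})]

    def to_json(self) -> str:
        # Serialize with sorted keys so records can be versioned and diffed.
        return json.dumps(asdict(self), indent=2, sort_keys=True)


record = ModelRecord(
    model_name="credit-risk-demo",
    version="1.0.0",
    data_sources=["loan_applications.csv"],
    preprocessing=["drop rows with missing income", "standardize numeric features"],
    algorithm="logistic regression",
    performance_metrics={"auc": 0.0},  # placeholder; fill in from a real evaluation
)
print(record.missing_sections())  # ['bias_tests']
print(record.to_json())
```

Keeping the record as data rather than free text makes it easy to check completeness automatically and to store one version alongside each released model.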

Challenges and Benefits of Implementation

Key challenges include the trade-off between model complexity and explainability and the difficulty of standardizing XAI metrics. The benefits, however, outweigh these hurdles: greater user trust, regulatory compliance (avoiding fines and sanctions), easier model debugging and maintenance, and bias mitigation that fosters fairer, more equitable AI.

Actionable Takeaways for Businesses and Developers

- Integrate XAI by Design: Adopt an

AI Pulse Editorial

Editorial team specialized in artificial intelligence and technology. AI Pulse is a publication dedicated to covering the latest news, trends, and analysis from the world of AI.
