Government AI: Challenges and Solutions for an Ethical Future

Image credit: Unsplash

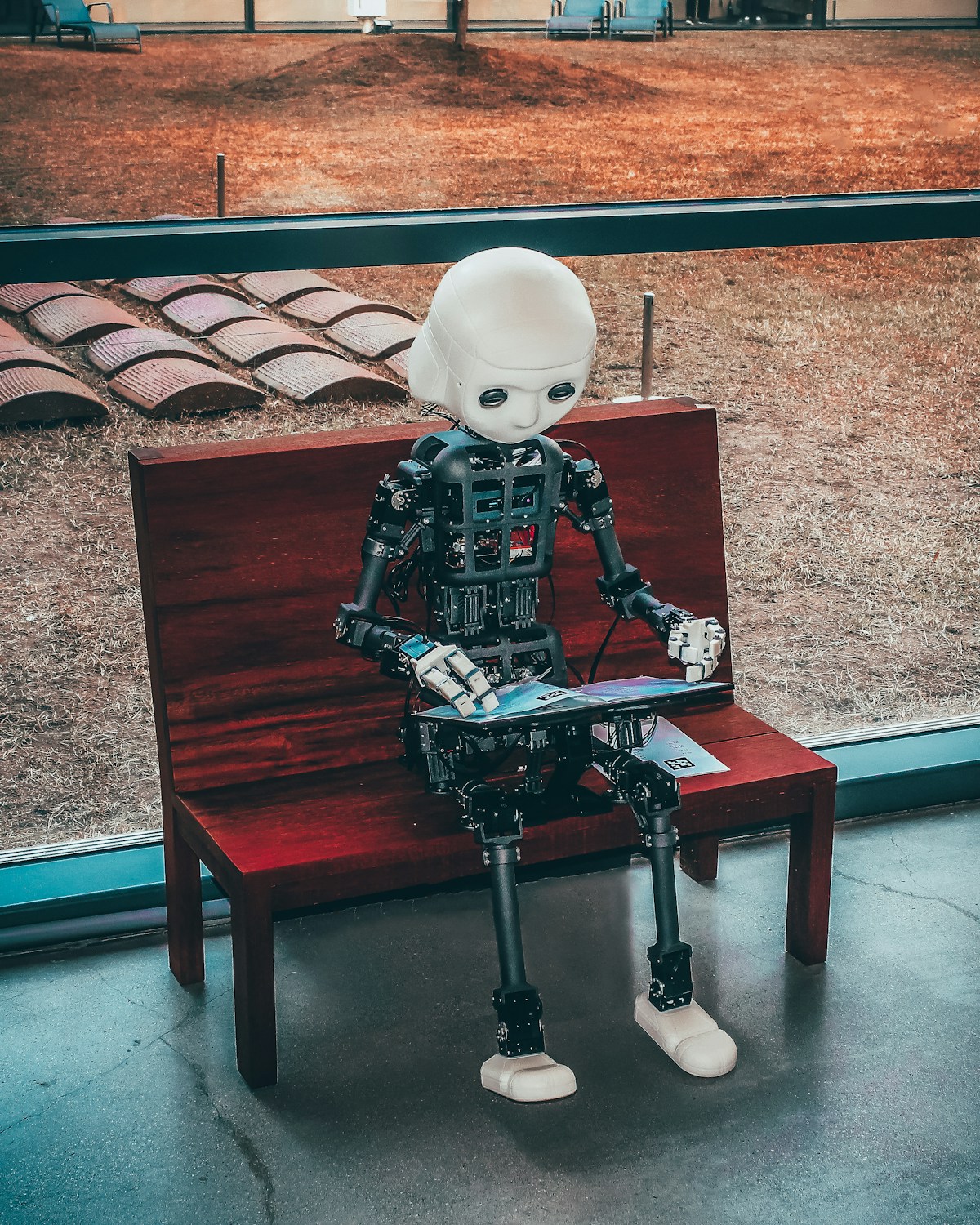

Artificial intelligence (AI) continues to reshape society and the economy at a rapid pace. As of March 2026, the need for robust government AI strategies and policies is more apparent than ever. Governments worldwide are trying to foster innovation while mitigating ethical, social, and economic risks. The central question is how to create a regulatory environment that promotes AI's advancement responsibly and equitably.

The Challenges of AI Governance

Crafting effective AI policies is fraught with challenges. First, technological innovation moves faster than the legislative process, so rules risk becoming obsolete soon after they are adopted. Second, AI's cross-border nature demands international cooperation, yet fragmented approaches (such as the EU AI Act versus the lighter-touch US approach) hinder harmonization. Third, the technical complexity of AI, including the opacity of certain models (the 'black box' problem), makes it difficult for policymakers to understand and regulate its impacts. Finally, the scarcity of AI talent within the public sector limits governments' ability to develop and implement informed policies.

Emerging Solutions and Policy Approaches

Despite the challenges, several solutions and policy approaches are gaining traction:

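- Risk-Based Regulation: The European Union's AI Act is a prime example, classifying AI systems by risk level and imposing proportionate obligations. Its tiers range from prohibited practices, through high-risk systems, to systems that carry only transparency duties or no new obligations. This approach allows innovation to flourish in low-risk areas while applying rigorous scrutiny to high-risk applications, such as remote biometric identification or credit scoring systems. Real-time facial recognition by law enforcement in public spaces is treated more strictly still and is largely prohibited, with narrow exceptions. The UK, by contrast, has taken a more sectoral approach that relies on existing regulators.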

- Regulatory Sandboxes and Testbeds: Countries like Singapore and the UK have implemented regulatory sandboxes that let companies test new AI technologies in a controlled environment under regulatory oversight. This helps regulators understand the practical challenges of AI and informs the development of more flexible, adaptive policies. Testbed initiatives at NIST in the US likewise aim to create environments for testing the robustness and security of AI systems.

- Investment in Capacity Building and Research: Governments are investing in training AI specialists within the public sector and promoting research into ethical and safe AI. Grant programs and collaborations with universities and research centers are crucial for building the internal capacity needed for AI governance. The establishment of AI expert advisory boards, such as the White House's AI advisory committee, also provides valuable insights.

- International Collaboration and Standards: The need for global cooperation is paramount. Forums like the OECD, G7, and G20 are working on developing international principles and standards for AI. Collaboration on areas such as data interoperability and AI-related cybersecurity is vital to prevent regulatory fragmentation and foster a globally responsible AI ecosystem.

Conclusion: Navigating AI's Future

AI governance is an ongoing journey, not a destination. Governments must adopt a proactive and adaptive stance, learning from emerging technologies and from experience elsewhere. By focusing on risk-based regulation, fostering innovative testing environments, investing in capacity building, and promoting international collaboration, we can build a future where AI serves humanity ethically. Success will hinge on balancing innovation with responsibility so that AI is a force for the common good.

AI Pulse Editorial

Editorial team specialized in artificial intelligence and technology. AI Pulse is a publication dedicated to covering the latest news, trends, and analysis from the world of AI.
