Multimodal AI: The Future of Intelligent Interaction in 2026

Image credit: Unsplash

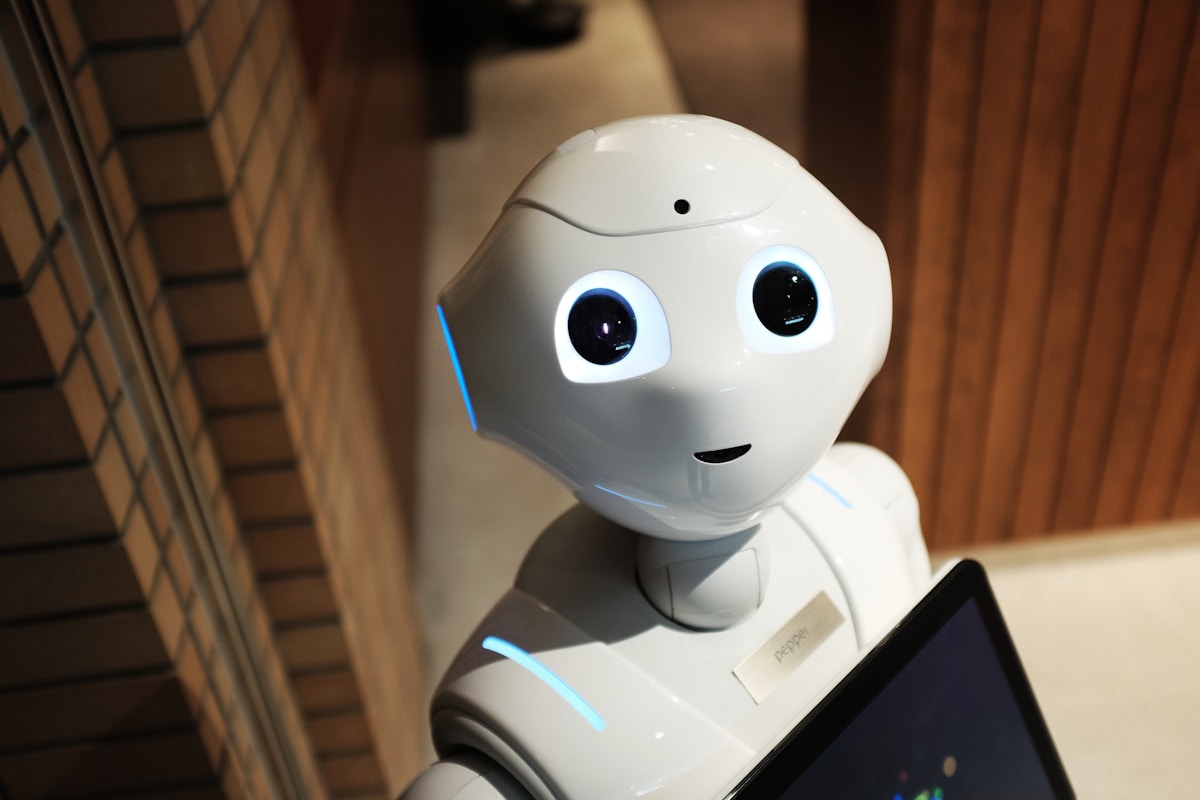

Multimodal artificial intelligence, which enables systems to process and relate information across modalities such as text, images, audio, and video, is a central focus of AI innovation in 2026. Far from being a mere academic curiosity, research in this area is paving the way for contextual, interactive AI that understands the world more holistically, in a way closer to human cognition.

Convergence of Foundation Models and Multimodality

One of the most prominent trends is that large foundation models (LLMs, VLMs) are increasingly built with native multimodal capabilities. Companies like Google DeepMind and OpenAI continue to lead with architectures that not only generate text or images but also reason jointly across them. We anticipate that models like Gemini and successors to GPT-4V will become the norm, offering cross-modal understanding and generation with greater coherence and nuance. Research focuses on optimizing unified encoders and decoders that handle different data types while reducing latency and computational cost.

Enhanced Human-Machine Interaction

Multimodal AI promises to change human-machine interaction substantially. More sophisticated virtual assistants, capable of interpreting not only voice commands but also facial expressions, gestures, and even tone of voice, are becoming a reality. This translates into more intuitive and empathetic user interfaces, which are crucial for applications in healthcare, education, and customer service. Research into interpreting emotions and intentions from multimodal signals is fundamental here, and it draws on advances in graph neural networks and cross-attention models.
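To make the idea of cross-attention concrete, here is a minimal sketch in plain Python. It shows scaled dot-product attention in which vectors from one modality (queries) attend over vectors from another (keys and values). The function names, the two-dimensional toy vectors, and the "text token" and "image patch" labels are illustrative assumptions, not taken from any particular model; real systems also apply learned projections to queries, keys, and values, which are omitted here.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(queries, keys, values):
    """Scaled dot-product cross-attention without learned projections.

    queries: vectors from one modality (e.g. text tokens)
    keys, values: vectors from another modality (e.g. image patches)
    Returns one attended vector per query, plus the attention weights.
    """
    dim = len(keys[0])
    out_dim = len(values[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(dim).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(dim)
            for k in keys
        ]
        weights = softmax(scores)
        # Weighted sum of the value vectors.
        attended = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(out_dim)
        ]
        outputs.append(attended)
        all_weights.append(weights)
    return outputs, all_weights


# Hypothetical toy embeddings: two "text tokens" and three "image patches".
text_tokens = [[1.0, 0.0], [0.0, 1.0]]
image_patches = [[4.0, 0.0], [0.0, 4.0], [1.0, 1.0]]

attended, weights = cross_attention(text_tokens, image_patches, image_patches)
for token, row in zip(text_tokens, weights):
    print(token, "attends with weights", [round(w, 3) for w in row])
```

In this toy setup each text token puts most of its attention on the image patch that points in the same direction, which is the basic mechanism that lets one modality select relevant information from another.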

Practical Applications and Ethical Challenges

The practical applications of multimodal AI are vast. In medicine, systems can analyze medical images (X-rays, MRIs), patient histories (text), and biosensor data (signals) for more accurate diagnoses. In robotics, multimodal perception allows robots to navigate and interact with complex environments more autonomously and safely. However, ethical and security challenges persist, including the privacy of sensitive multimodal data and the risk of algorithmic bias amplified by data complexity. The interpretability of multimodal models is a critical research area to ensure trust and accountability.
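One simple way to combine several modalities is late fusion, where each modality produces its own prediction and the results are merged afterwards. The sketch below is a toy illustration under stated assumptions: the function name `late_fusion`, the modality names, the weights, and the probabilities are all hypothetical, and a weighted average is only one of many possible fusion strategies. It is not a clinical method.

```python
def late_fusion(modality_probs, modality_weights):
    """Combine per-modality probabilities with a weighted average.

    Modalities whose probability is None (e.g. a missing scan) are
    skipped, and the remaining weights are renormalized.
    """
    available = {m: p for m, p in modality_probs.items() if p is not None}
    if not available:
        raise ValueError("no modality produced a prediction")
    total_weight = sum(modality_weights[m] for m in available)
    weighted_sum = sum(modality_weights[m] * p for m, p in available.items())
    return weighted_sum / total_weight


# Hypothetical per-modality probabilities for a single finding.
probs = {"image": 0.8, "text": 0.6, "signal": None}  # biosensor data missing
weights = {"image": 0.5, "text": 0.3, "signal": 0.2}  # assumed trust per modality

fused = late_fusion(probs, weights)
print(f"fused probability: {fused:.3f}")
```

Keeping the per-modality scores separate until the final step also helps with the interpretability concern raised above, since each modality's contribution to the fused result can be inspected directly.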

Conclusion and Future Outlook

In 2026, multimodal AI is rapidly maturing, moving from laboratory experiments to real-world deployments. Research will continue to focus on computational efficiency, robustness to noisy data, and the ability to reason abstractly about multimodal information. The next generation of multimodal systems is expected not only to process data but also to learn how to learn, adapting to new scenarios with minimal human intervention. For developers and researchers, the focus must be on creating architectures that prioritize interpretability and ethics, ensuring that the power of multimodal AI is used for the benefit of all.

AI Pulse Editorial

Editorial team specialized in artificial intelligence and technology. AI Pulse is a publication dedicated to covering the latest news, trends, and analysis from the world of AI.
