Technological Optimism and Prudent Fear: Debating AI's Future

Image credit: Import AI Newsletter

The Incessant March of Artificial Intelligence Progress

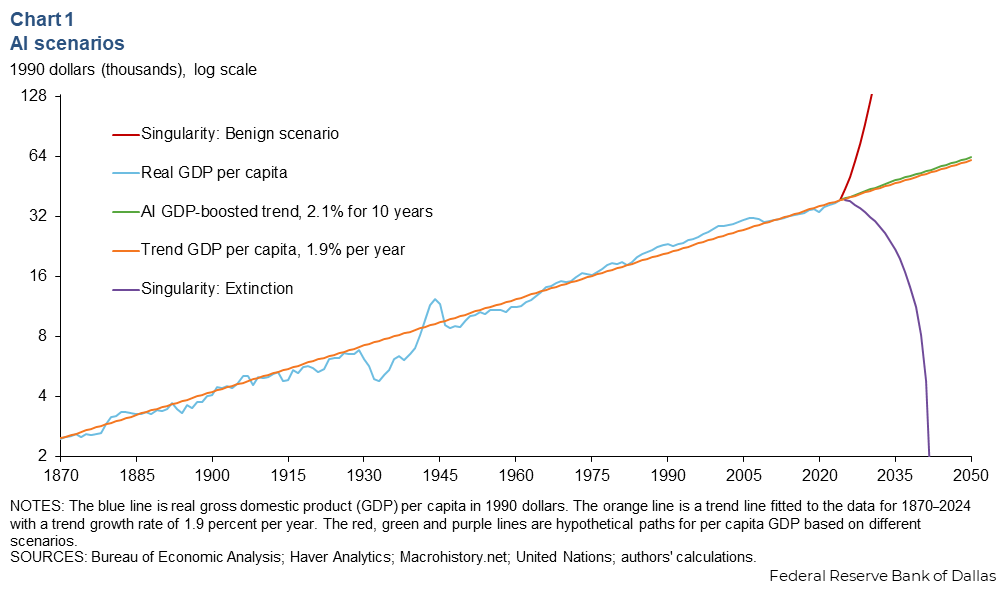

The pace of artificial intelligence (AI) development has been nothing short of remarkable, transforming industries and redefining what's possible. From virtual assistants to advanced medical diagnostic systems, AI is increasingly integrated into our daily lives, promising unprecedented efficiencies and solutions to complex challenges. However, this rapid evolution also raises profound questions about its long-term impact on society and the global economy.

Experts and thought leaders worldwide are constantly evaluating AI's trajectory. Recent reports, such as those from Stanford HAI, highlight not only technical progress but also growing concerns regarding governance and safety. The question is no longer whether AI will continue to advance, but how we should prepare for that continued progress.

The Duality of Optimism and Caution

The AI community and the general public are engaged in a polarized debate. On one hand, there's palpable optimism, fueled by AI's promise to solve intractable problems, from drug discovery to optimizing energy grids. Companies like Google DeepMind continue to demonstrate impressive capabilities in areas such as biology and materials science, fostering the belief that AI can be a force for global good.

On the other hand, there's growing caution, or even fear, regarding potential risks. Concerns about job displacement, data privacy, algorithmic bias, and even more extreme scenarios of loss of control are frequently raised. The need for robust regulation and clear ethical guidelines is a recurring theme, seeking to balance innovation with societal protection. For more insights on how businesses are navigating these challenges, visit our section on enterprise AI.

Navigating the Future: Governance and Responsibility

As AI becomes more powerful and autonomous, the question of governance becomes paramount. It's not just about creating intelligent systems but ensuring these systems are developed and deployed responsibly. This involves collaboration among governments, technology companies, academia, and civil society to establish standards and frameworks that can guide AI development beneficially.

Global initiatives, such as UNESCO's Recommendation on the Ethics of AI, aim to provide a framework for ethical AI development. The responsibility falls on all stakeholders to foster a culture of transparency, explainability, and accountability. The discussion around AI safety, for instance, is an active research field seeking to mitigate risks before they materialize on a large scale.

Why It Matters

This debate between optimism and fear is crucial because it will shape the policies, investment, and public acceptance of artificial intelligence. A balanced approach is essential to harness AI's vast potential while safeguarding human values and preventing unintended consequences, ensuring technological progress serves humanity sustainably and equitably.

This article was inspired by content originally published on Import AI Newsletter by Jack Clark. AI Pulse rewrites and expands AI news with additional analysis and context.

AI Pulse Editorial

Editorial team specialized in artificial intelligence and technology. AI Pulse is a publication dedicated to covering the latest news, trends, and analysis from the world of AI.
