Anthropic's Claude Cowork: Complex Automation, Inherent Risks

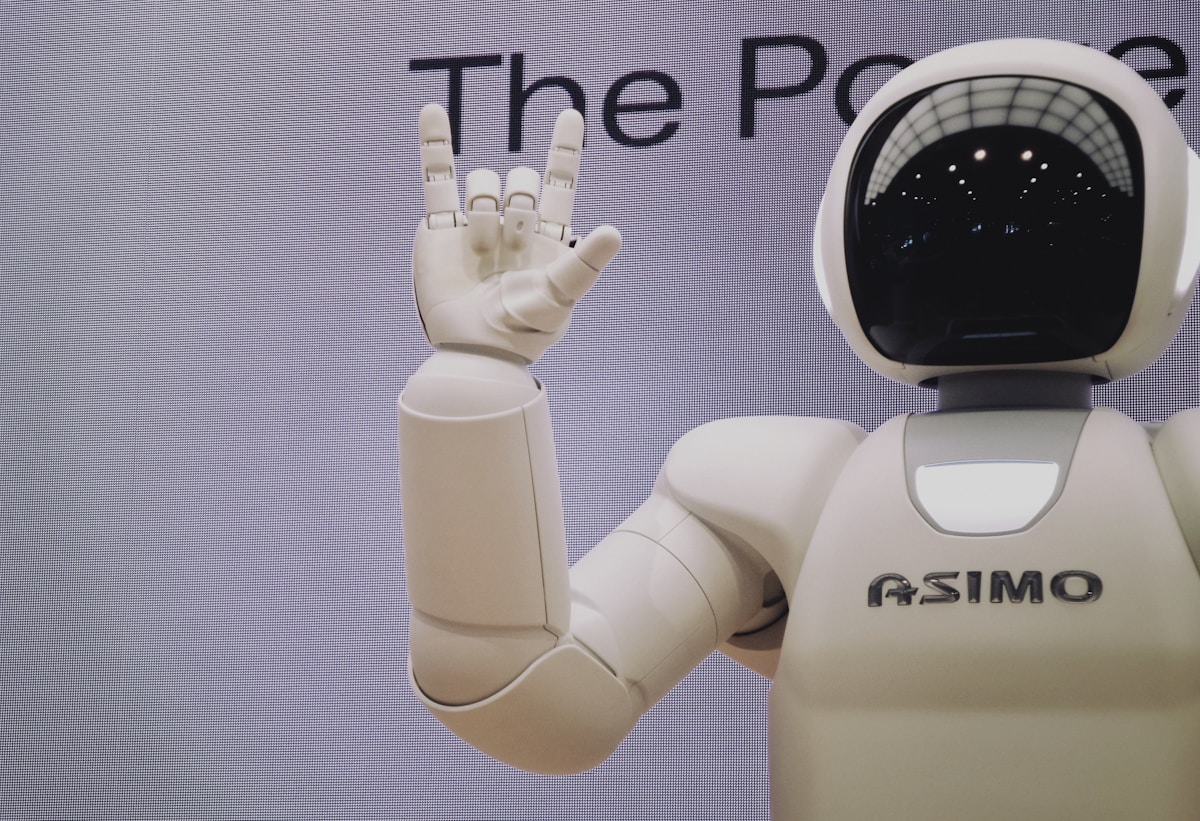

Image credit: Photo by Franck V. on Unsplash

The Rise of AI Agent Automation

Anthropic, a leading artificial intelligence company, has introduced Claude Cowork, a feature that lets its Claude models automate complex task sequences. Initially available as a research preview for Claude Max subscribers, Cowork marks a significant step towards more autonomous AI agents that can interact with various tools and platforms to carry out multi-step objectives. This capability extends beyond traditional chat interactions, aiming to turn Claude into a proactive assistant that can, for instance, browse the web, analyze data, and even interact with APIs to complete projects.

The idea behind Cowork is to mimic how a human would coordinate multiple tools to achieve a goal, but with the speed and scale of AI. Anthropic's vision for AI has consistently emphasized safety and interpretability, and Cowork reflects this approach, even with the inherent challenges of autonomy.

How Claude Cowork Functions and Its Risks

Claude Cowork allows the AI model to make chained decisions, drawing on a suite of tools and its understanding of context to work through tasks. For example, a user might ask Claude to “research the latest AI trends and generate a report,” and the system would, in theory, break this down into sub-tasks such as web searching, summarization, and formatting. The company stresses that while powerful, this is a research-phase feature, and users should proceed with caution. Anthropic itself warns of security and privacy risks, and of the potential for the model to “hallucinate” or take unintended actions.
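To make the idea of chained sub-tasks concrete, here is a minimal Python sketch of the search, summarize, and format sequence from the example above. It is an illustration only, not Anthropic's implementation: the names `Step`, `run_chain`, `web_search`, `summarize`, and `format_report` are hypothetical, and each tool is a stand-in that returns a placeholder string rather than calling a real service.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Step:
    """One sub-task in the chain (hypothetical structure for illustration)."""
    name: str
    run: Callable[[str], str]


def web_search(query: str) -> str:
    # Stand-in: a real agent would call a search tool here.
    return f"results for '{query}'"


def summarize(text: str) -> str:
    # Stand-in: a real agent would ask the model to condense the text.
    return f"summary of [{text}]"


def format_report(text: str) -> str:
    # Stand-in: a real agent would produce a formatted document.
    return f"REPORT\n{text}"


def run_chain(goal: str, steps: List[Step]) -> str:
    """Feed the output of each step into the next one, in order."""
    output = goal
    for step in steps:
        output = step.run(output)
    return output


PLAN = [
    Step("search", web_search),
    Step("summarize", summarize),
    Step("format", format_report),
]

report = run_chain("latest AI trends", PLAN)
print(report)
```

Because every step consumes the previous step's output, an error early in the chain (for example, a hallucinated search result) carries through to the final report, which is one reason the cautions above matter.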

This scenario raises important questions about the governance and control of AI agents. A model's ability to interact with external systems, such as email accounts or software platforms, demands a level of trust and oversight that is still being defined. The use of AI tools for enterprise automation is a growing topic, and you can learn more about it in our enterprise AI section.
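One common way to express this kind of oversight in code is a human approval gate in front of sensitive actions. The Python sketch below is a simplified illustration under stated assumptions, not a description of how Cowork works: `Action`, `SENSITIVE_TOOLS`, `run_with_oversight`, and the tool names are all hypothetical, and the `approve` callback stands in for whatever confirmation prompt a real product would show.

```python
from dataclasses import dataclass
from typing import Callable, FrozenSet, List


@dataclass
class Action:
    """An action the agent proposes to take (hypothetical structure)."""
    tool: str
    description: str


# Assumption: tools that touch external systems require explicit approval.
SENSITIVE_TOOLS: FrozenSet[str] = frozenset({"send_email", "delete_file"})


def run_with_oversight(
    actions: List[Action],
    approve: Callable[[Action], bool],
    sensitive: FrozenSet[str] = SENSITIVE_TOOLS,
) -> List[str]:
    """Run each action, asking `approve` first when the tool is sensitive."""
    log: List[str] = []
    for action in actions:
        if action.tool in sensitive and not approve(action):
            # The human declined, so the action is skipped and recorded.
            log.append(f"blocked: {action.tool}")
            continue
        # Stand-in for actually invoking the tool.
        log.append(f"ran: {action.tool}")
    return log


proposed = [
    Action("web_search", "look up recent AI news"),
    Action("send_email", "email the report to the team"),
]

# Here the reviewer declines every sensitive action.
print(run_with_oversight(proposed, approve=lambda action: False))
```

The design choice is that low-risk actions proceed automatically while anything in the sensitive set waits for a person, and every decision lands in a log that can be reviewed afterwards.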

Implications for the Future of AI Automation

The advent of features like Claude Cowork signals a paradigm shift in how we interact with AI. We are moving towards a future where AI assistants not only answer questions but also act on our behalf, executing complex, end-to-end processes. This has vast implications for personal and business productivity, potentially freeing up human time for more creative and strategic tasks.

However, the need for human oversight and risk mitigation remains crucial. AI safety research, such as that conducted by institutions like MIT CSAIL, is more relevant than ever. As models become more capable, the responsibility to ensure their actions align with human intentions and ethical values becomes paramount. Developers and users will need to collaborate to establish best practices and safeguards for these advanced systems. You can also compare AI tools to see how different platforms approach these challenges.

Why It Matters

The launch of Claude Cowork is a significant milestone in the evolution of AI agents, demonstrating the potential for complex task automation and productivity transformation. However, it also highlights the increasing need to proactively address security, privacy, and control challenges as AI becomes more autonomous and integrated into our daily workflows. How we manage these risks will determine the success and widespread adoption of this new generation of AI tools.

This article was inspired by content originally published on ZDNet. AI Pulse rewrites and expands AI news with additional analysis and context.

AI Pulse Editorial

Editorial team specialized in artificial intelligence and technology. AI Pulse is a publication dedicated to covering the latest news, trends, and analysis from the world of AI.
