RL Best Practices: Optimizing Training and Performance

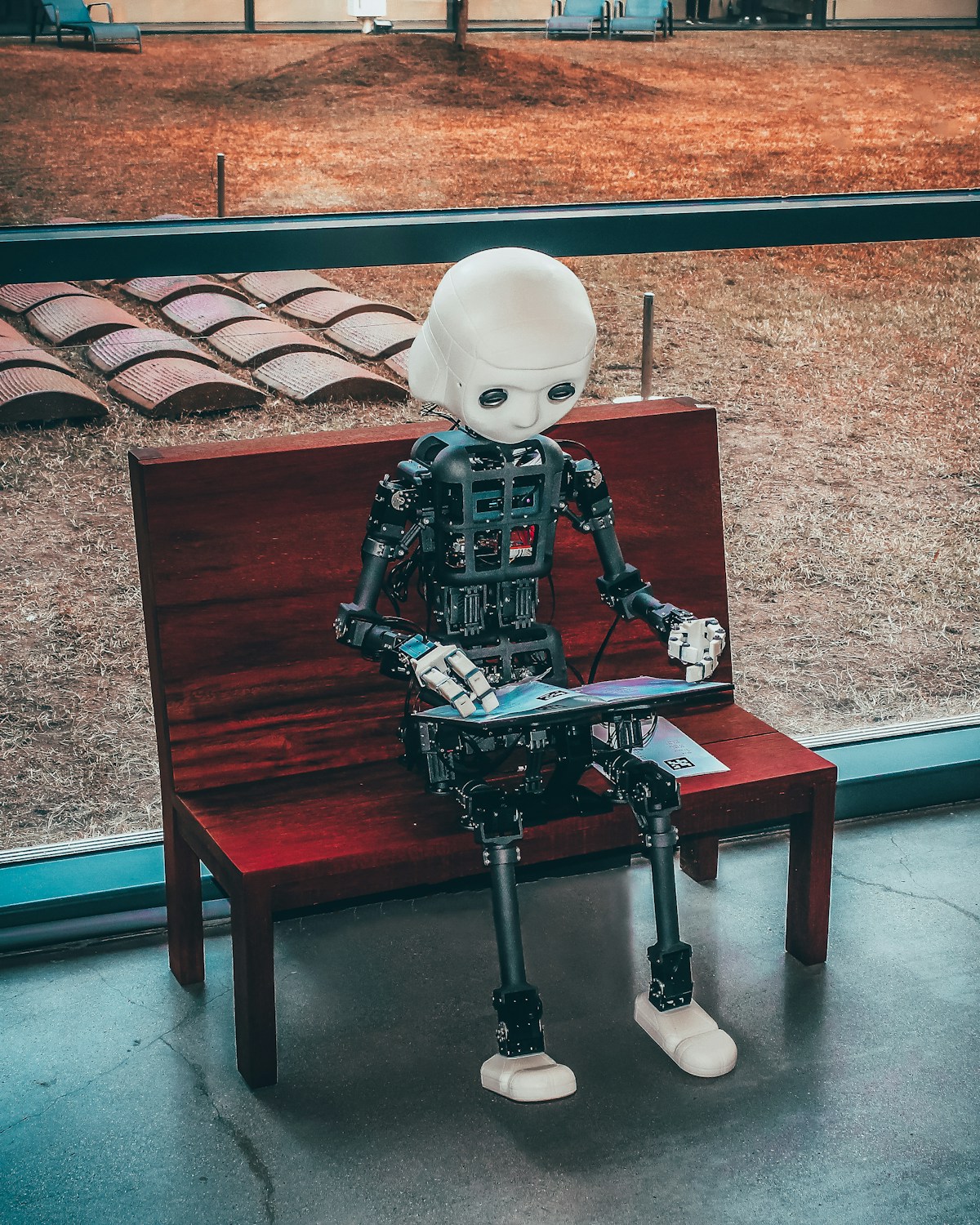

Image credit: Unsplash

Reinforcement Learning (RL) has become a driving force in artificial intelligence, enabling agents to learn decision-making policies in complex environments. From DeepMind's breakthroughs with AlphaGo to applications in robotics and system optimization, RL continues to evolve rapidly. However, building robust and efficient RL agents depends on a set of best practices that address two recurring challenges: training instability and sample inefficiency.

1. Reward Engineering and Environment Modeling

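The quality of the reward function and the fidelity of the environment model are paramount. A well-designed reward function balances two risks: dense, hand-crafted rewards invite reward hacking, where the agent exploits loopholes in the reward instead of solving the task, while overly sparse rewards give the agent too little signal to learn from. Reward shaping can accelerate learning but must be applied cautiously, because a poorly chosen shaping term can change which policy is optimal. Accurate simulators, such as MuJoCo or NVIDIA's Isaac Gym, are indispensable for initial training, allowing safe exploration and efficient data collection. Sim-to-real transfer requires strategies like domain randomization, which varies simulator parameters (for example friction, mass, or lighting) during training so that the policy does not overfit to a single simulated world.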
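
One well-known way to shape rewards without changing the optimal policy is potential-based shaping, which adds the term gamma * phi(s') - phi(s) to the environment reward. The sketch below is a minimal illustration under stated assumptions: the 1-D line world, the `GOAL` position, and the `negative_distance` potential are hypothetical and chosen only for this example.

```python
from typing import Callable


def shaped_reward(
    reward: float,
    state: int,
    next_state: int,
    potential: Callable[[int], float],
    gamma: float = 0.99,
    done: bool = False,
) -> float:
    """Return reward + gamma * phi(next_state) - phi(state)."""
    # Treat the potential of a terminal state as zero so the shaping
    # terms telescope over an episode.
    next_potential = 0.0 if done else potential(next_state)
    return reward + gamma * next_potential - potential(state)


GOAL = 10  # hypothetical goal position on a 1-D line


def negative_distance(state: int) -> float:
    # Hypothetical potential: states closer to the goal have higher potential.
    return -abs(GOAL - state)


# With a raw reward of zero, a step toward the goal earns a positive
# shaping bonus and a step away earns a penalty.
toward = shaped_reward(0.0, state=3, next_state=4, potential=negative_distance, gamma=1.0)
away = shaped_reward(0.0, state=3, next_state=2, potential=negative_distance, gamma=1.0)
print(toward, away)
```

Because the bonus depends only on a difference of potentials, the agent cannot accumulate extra return by looping through states, which is the kind of unintended bias that ad hoc shaping terms tend to introduce.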

2. Algorithm Selection and Hyperparameter Optimization

The choice of RL algorithm significantly impacts performance. For continuous action spaces, PPO (Proximal Policy Optimization) and SAC (Soft Actor-Critic) are often favored, the former for its stability and the latter for its sample efficiency. In contrast, Q-learning or DQN may be more suitable for discrete action spaces. Hyperparameter optimization, covering the learning rate, discount factor, and neural network architecture, is vital. Tools such as Ray Tune or Optuna automate the search and reduce experimentation time; because RL results vary widely between runs, evaluating each configuration across several random seeds makes the comparison more robust.
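
To make the roles of the learning rate and discount factor concrete, here is a minimal sketch of tabular Q-learning on a discrete action space. The chain environment (states in a row, reward 1.0 on reaching the last state) and the function name `q_learning_chain` are hypothetical, invented for this illustration; `alpha`, `gamma`, and `epsilon` are the kind of hyperparameters a search tool would tune.

```python
import random


def q_learning_chain(n_states=5, episodes=2000, alpha=0.1, gamma=0.9,
                     epsilon=0.1, seed=0):
    """Tabular Q-learning on a toy chain; action 0 moves left, 1 moves right."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(n_states)]
    for _ in range(episodes):
        state = 0
        for _ in range(100):  # cap the episode length
            if rng.random() < epsilon:
                action = rng.randrange(2)  # explore
            else:
                best = max(q[state])  # exploit, breaking ties at random
                action = rng.choice([a for a in range(2) if q[state][a] == best])
            next_state = max(0, state - 1) if action == 0 else state + 1
            done = next_state == n_states - 1
            reward = 1.0 if done else 0.0
            # Bootstrapped target: no future value beyond a terminal state.
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
            if done:
                break
    return q


q_table = q_learning_chain()
# Greedy action for every non-terminal state.
greedy_actions = [row.index(max(row)) for row in q_table[:-1]]
print(greedy_actions)
```

Calling the function with different `seed` values is a cheap way to check whether a hyperparameter setting is reliably good or merely lucky on one run.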

3. Training Stability and Sample Efficiency

Addressing training instability is crucial. Two techniques popularized by DQN stabilize value-based learning: experience replay buffers break the correlation between consecutive transitions by sampling past experience at random, and target networks keep the bootstrapped targets fixed between periodic updates. For on-policy algorithms, collecting a batch of several episodes before each update and normalizing the advantages within that batch can improve stability. Sample efficiency, i.e., the ability to learn from fewer environment interactions, is an active research area. Model-based RL (e.g., PlaNet, Dreamer) reduces the need for real interactions by learning a predictive model of the environment, while offline RL (e.g., CQL, IQL) learns from pre-existing datasets without further interaction.
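
Two of these stabilizers are simple enough to sketch with the standard library alone. The code below is an illustrative sketch, not a reference implementation: the `ReplayBuffer` class, the transition tuple layout, and the `normalize_advantages` helper are names assumed for this example.

```python
import random
from collections import deque
from statistics import fmean, pstdev


class ReplayBuffer:
    """Fixed-size buffer that samples stored transitions uniformly at random."""

    def __init__(self, capacity, seed=0):
        # A deque with maxlen silently drops the oldest transition when full.
        self._storage = deque(maxlen=capacity)
        self._rng = random.Random(seed)

    def add(self, transition):
        self._storage.append(transition)

    def sample(self, batch_size):
        # Uniform sampling without replacement breaks up the temporal
        # correlation between consecutive transitions.
        return self._rng.sample(list(self._storage), batch_size)

    def __len__(self):
        return len(self._storage)


def normalize_advantages(advantages, eps=1e-8):
    """Rescale a batch of advantages to zero mean and roughly unit variance."""
    mean = fmean(advantages)
    std = pstdev(advantages)
    return [(a - mean) / (std + eps) for a in advantages]


buffer = ReplayBuffer(capacity=3)
for step in range(5):
    # Hypothetical layout: (state, action, reward, next_state, done)
    buffer.add((step, 0, 0.0, step + 1, False))
batch = buffer.sample(2)

normalized = normalize_advantages([1.0, 2.0, 3.0])
```

In practice both pieces usually operate on arrays or tensors rather than Python lists, but the logic is the same.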

Conclusion

Successful development of RL systems demands a methodical approach that integrates careful reward engineering, accurate environment modeling, informed algorithm selection, and advanced techniques to ensure training stability and efficiency. By adhering to these best practices, researchers and engineers can accelerate RL's progress, unlocking its potential in increasingly complex and critical domains, from industrial robotics to drug discovery.

AI Pulse Editorial

Editorial team specialized in artificial intelligence and technology. AI Pulse is a publication dedicated to covering the latest news, trends, and analysis from the world of AI.
